
HA on $40/month: No load balancer, no K8s, just DNS and SSH tunnels

2026-03-17 | Arjoonn S | Sethu Vishal S

How we run an API serving 200k requests/day across multiple nodes for ~$40/month: no load balancer, no central coordinator, just cooperative clients, local read replicas, and SSH-tunneled writes.

Three VPS machines at $12/month each: two app nodes and one PostgreSQL primary. There is no central load balancer; clients decide which node to talk to. Traffic is ~220k API requests/day and spiky: peaks hit 50 req/sec in short bursts, 600 req/min over a minute, and 20k req/hr over an hour. When the primary goes down, the API degrades gracefully to read-only. Total cost: ~$40/month including S3 backups.

How We Got Here

We didn't design this upfront. Each stage was forced by a concrete failure over a period of a few years.

Single machine

Django + SQLite on one VPS. Deploys were git pull && systemctl restart gunicorn. Worked fine while the service handled up to a few thousand requests/day.

Multiple gunicorn workers writing simultaneously produced database is locked errors under traffic bursts. WAL mode helped but didn't fix it fully. We migrated to PostgreSQL on the same machine.

App and DB fight over RAM

Past ~80k requests/day, gunicorn workers and PostgreSQL were competing for memory and CPU on a small machine. Traffic is spiky: average throughput looks modest, but peaks hit ~50 req/sec, which is enough to cause worker pileups during slow queries. We split app and DB onto separate machines.

One app server means every restart is downtime, and a hardware failure means ~20-40 minutes offline while a replacement provisions on the cloud. At 200k requests/day that's a real cost. We added a second web node.

The DB was still on one machine. When that went down, everything went down. So we added a managed DB cluster. To manage costs, we reduced the API tier to a single machine. This worked well.

Growing expense

With a beefy API server and a Linode managed PostgreSQL cluster (3-node HA), the cluster alone cost ~$180/month. Adding the API node pushed the bill past $200/month, which was prohibitive for our scale. We could not simply drop the cluster and switch to a self-hosted primary on a budget VPS; that would reintroduce the SPOF problem.

During this time we also had a datacenter fire and multiple AWS downtime events, all of which took down our service. That made us look at multi-cloud so that a single provider failure does not take the service down.

That's where we are now. The rest of this post explains the current architecture in detail.

The Architecture

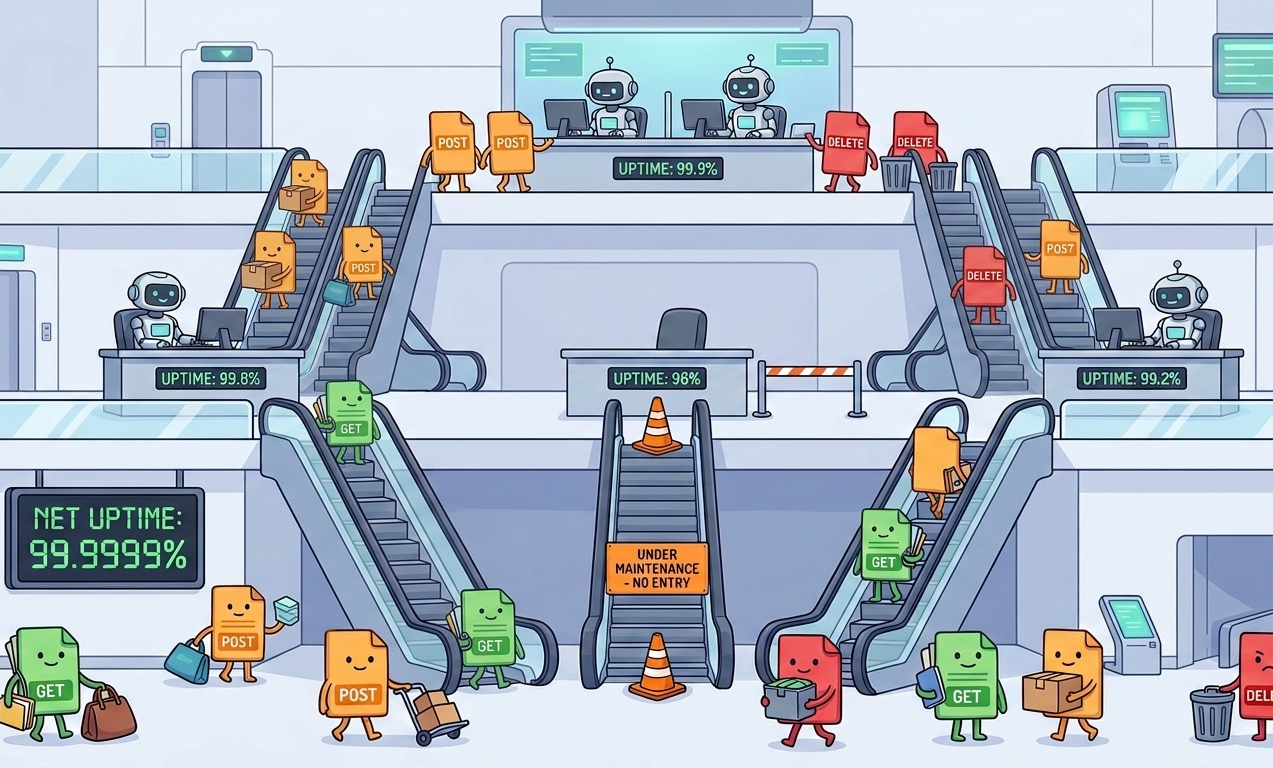

Each web node (api1, api2, ...) is an independent VPS, potentially across different cloud providers. There is no load balancer in front of them. Clients know about each node by name and choose which one to call themselves.

Each node runs:

- Caddy: reverse proxy, automatic TLS per node

- Django + gunicorn: application server

- PostgreSQL read replica: local, serves all read traffic

- Redis: local cache and rate limit state

- Mole: persistent SSH tunnel to the primary DB node (terminates at a hardened SSH sidecar on the DB host)

On the DB node we run PostgreSQL primary alongside a hardened SSH tunnel sidecar container that shares the same Docker network as the primary. The DB host itself does not accept SSH connections; all tunnels terminate at the sidecar, which forwards into the private Docker network.

Reads are served from the local replica. Writes go through the Mole tunnel, terminate at the sidecar on the DB node, and are forwarded directly to the primary. The primary is never exposed to the public internet.

Cooperative Client Load Balancing

This is the most important design decision, and the most unusual one. There is no central load balancer or DNS round robin deciding which node a client hits. Each downstream application ships with knowledge of all named API endpoints (api1.example.com, api2.example.com, ...) and picks one itself based on:

- Latency: the nearest node wins, naturally achieving geographic affinity without any central authority.

- Health: if a node returns an error or a 429 Too Many Requests, the client backs off from that node for a while and prefers the others.

Our client side algorithm: jittered round robin weighted by latency derived health scores stored in Redis. We track per node moving latency and recent error/429 rates; selection adds jitter to avoid herds and decays weights over time so nodes recover smoothly.
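A minimal sketch of this kind of selection, under several assumptions: health state lives in process memory rather than Redis, a weighted random pick stands in for the jittered round robin, and the class name `NodePicker`, the smoothing factor, the jitter range, and the 30-second penalty window are all hypothetical values chosen for illustration.

```python
import random
import time


class NodePicker:
    """Pick an API node by latency-derived weight, with jitter and error back-off."""

    def __init__(self, nodes, alpha=0.3, penalty_seconds=30.0, jitter=0.2, rng=None):
        self.nodes = list(nodes)
        self.alpha = alpha                      # smoothing factor for the moving latency
        self.penalty_seconds = penalty_seconds  # how long an error/429 depresses a node
        self.jitter = jitter                    # +/- fraction of random noise per weight
        self.rng = rng or random.Random()
        self.latency = {n: 0.1 for n in self.nodes}          # seconds, initial guess
        self.penalized_until = {n: 0.0 for n in self.nodes}  # monotonic timestamps

    def record(self, node, latency_s, ok, now=None):
        """Feed back the outcome of one request (ok=False for errors and 429s)."""
        now = time.monotonic() if now is None else now
        if ok:
            self.latency[node] = (1 - self.alpha) * self.latency[node] + self.alpha * latency_s
        else:
            self.penalized_until[node] = now + self.penalty_seconds

    def weight(self, node, now):
        """Faster nodes weigh more; a penalized node recovers linearly over the window."""
        base = 1.0 / max(self.latency[node], 1e-3)
        remaining = self.penalized_until[node] - now
        if remaining <= 0:
            return base
        return base * max(0.05, 1.0 - remaining / self.penalty_seconds)

    def pick(self, now=None):
        now = time.monotonic() if now is None else now
        weights = [
            self.weight(n, now) * self.rng.uniform(1 - self.jitter, 1 + self.jitter)
            for n in self.nodes
        ]
        return self.rng.choices(self.nodes, weights=weights, k=1)[0]
```

A caller would wrap each request roughly as `node = picker.pick()`, time the call, then `picker.record(node, elapsed, ok=response_ok)`. The small floor on a penalized node's weight keeps a trickle of traffic flowing to it, which is how the client notices that the node has recovered.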

Other algorithms we could choose depending on workload at some point in the future:

- Power of two choices (P2C) with latency or in flight weight

- EWMA-based latency weighting

This makes failures self healing from the client's perspective. If api1 and api2 go down, clients automatically route to api3-api10 with no DNS change required. There's no TTL to wait out, no health check propagation delay, no coordinator that can become a single point of failure.

A common question here: isn't the client SDK itself a coordinator? Yes, in a sense. The routing logic lives in the SDK rather than on a central server. The difference is that the downstream clients are all systems we control and deploy, so we can update the SDK on our own schedule. More importantly, the coordination cost is distributed and each client handles routing for its own traffic slice rather than one box handling all of it. We've also had a central HAProxy go down before, which caused a full outage. This approach removes that failure mode entirely.

Another question: how do clients discover new nodes? They don't, automatically. We keep nodes pre provisioned and named (api1 through api10). Under normal conditions only a few are active. When we need more capacity, we bring up one of the spare numbered nodes. Clients already have that hostname baked in and will start routing to it once it responds. Emergency scaling is vertical (upgrading existing active machines), which doesn't require any client change at all.

The SSH Tunnel (Mole)

Mole is a lightweight CLI tool that establishes and maintains a persistent SSH tunnel. We use it to forward the PostgreSQL port from each web node to the primary DB node. Each web node's Django app sees the primary as localhost:5433: no VPN, no firewall rules, no cloud provider VLAN.

Isolation details: the SSH server we connect to is a separate hardened container on the DB host, on the same Docker network as pg_main. The host and the Postgres container do not accept SSH themselves; all tunnels terminate at the sidecar and forward into the private Docker network. This allows strict network policy while keeping the primary unexposed.

We considered alternatives:

- Tailscale: we actually tried this. Frequent fallbacks to DERP relay servers caused enough extra latency that we dropped it. Tailscale is supposed to use direct P2P connections between servers, but in practice we saw relay usage often enough to matter.

- Cloud provider VLAN: ties us to a single provider, which breaks multi-cloud redundancy.

- Direct PostgreSQL port forward: exposes the DB port to the public internet.

SSH is simple, auditable, and universally understood. Mole runs as a systemd service with Restart=always. If the tunnel drops, it reconnects within seconds. A dropped tunnel means that node can't replicate or write until it reconnects; reads from the local replica continue uninterrupted.

PostgreSQL streaming replication uses a persistent connection through the tunnel. When the tunnel bounces, the replica detects the lost connection and automatically re-establishes the WAL stream once Mole reconnects. No manual intervention needed.

Read/Write Splitting and Graceful Degradation

Our API traffic is overwhelmingly read heavy. Django's DB router sends all reads to the local replica and all writes to the primary (via the Mole tunnel). The configuration looks like:

    DATABASES = {
        'default': {  # primary - writes only (via Mole tunnel to the DB node)
            'ENGINE': 'django.db.backends.postgresql',
            'HOST': 'localhost',
            'PORT': '5433',  # Mole tunnel
            ...
        },
        'replica': {  # local replica - reads
            'ENGINE': 'django.db.backends.postgresql',
            'HOST': 'localhost',
            'PORT': '5432',
            ...
        },
    }
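The router that pairs with these two aliases is not shown in the post. A minimal sketch of what such a router could look like, using Django's documented router hooks; the class name and the module path in `DATABASE_ROUTERS` are hypothetical:

```python
class PrimaryReplicaRouter:
    """Send reads to the local replica and writes to the primary."""

    def db_for_read(self, model, **hints):
        # 'replica' is the local PostgreSQL read replica on this node.
        return "replica"

    def db_for_write(self, model, **hints):
        # 'default' is the primary, reached through the Mole tunnel.
        return "default"

    def allow_relation(self, obj1, obj2, **hints):
        # Both aliases hold the same data, so relations across them are fine.
        return True

    def allow_migrate(self, db, app_label, model_name=None, **hints):
        # Schema changes run on the primary only; the replica receives them via WAL.
        return db == "default"


# settings.py (the dotted path is an assumption about project layout)
DATABASE_ROUTERS = ["myproject.routers.PrimaryReplicaRouter"]
```

Because the router is a plain class with no Django imports, it can be unit tested without a database.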

If the primary goes down, reads keep working from the local replica. Only write endpoints start returning errors. This is graceful degradation: most of our API surface stays available without any special failover logic. The degradation is a natural consequence of the routing, not an engineered mode we switch into.

One sharp edge: read-your-own-writes. If a client writes something and immediately reads it back, replication lag means it might not see the write yet. Lag can reach up to 2 seconds on multi continent connections between replica and primary. Any endpoint where this matters must explicitly use using('default') to force a read from the primary. Easy to forget; worth having a test or lint rule for.
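One way to make the primary read harder to forget is to pin a whole block of code to the primary instead of annotating each query. This is a sketch of an alternative to per-query using('default'), not something the post describes; `read_from_primary` and `PinnableRouter` are hypothetical names, and the approach assumes the router is consulted when each query is evaluated.

```python
import contextvars
from contextlib import contextmanager

# Per-context flag: True while the current request wants primary reads.
_force_primary = contextvars.ContextVar("force_primary", default=False)


@contextmanager
def read_from_primary():
    """Within this block, the router below sends reads to the primary as well."""
    token = _force_primary.set(True)
    try:
        yield
    finally:
        _force_primary.reset(token)


class PinnableRouter:
    """Replica reads by default; primary reads while read_from_primary() is active."""

    def db_for_read(self, model, **hints):
        return "default" if _force_primary.get() else "replica"

    def db_for_write(self, model, **hints):
        return "default"
```

A write-then-read endpoint would wrap its read in `with read_from_primary():`. Querysets are lazy, so the query has to be evaluated inside the block for the pin to apply.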

We also use django-knox for auth tokens. Knox stores tokens in the DB, which gets replicated to all nodes so a user who authenticates against api1 is eventually valid on api2 as well, with no shared session store needed. The replication lag window is the same as above.

Rate Limiting

Each node rate limits independently using its local Redis. This is intentional: different nodes can serve different traffic volumes due to differences in cloud providers / machine specs etc (we are pinning the cost per machine, not the machine spec), and our workload is almost entirely read only, so there's no correctness issue with clients getting more aggregate throughput by using multiple nodes.

Abuse prevention works differently. We use a rotating secret User-Agent allowlist, backed by simple, slow rotating secrets so we can gate "unknown" traffic quickly. This is the first of several layers we apply to prevent abuse; unrecognized traffic hits a low catch all rate limit.
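The two tiers described above can be sketched as a fixed-window counter. Everything concrete here is an assumption: a dict stands in for the node's local Redis, and the limits, window size, and User-Agent value are made up, since the post does not state the real ones.

```python
import time


class FixedWindowLimiter:
    """Per-node fixed-window rate limiter; a dict stands in for the local Redis."""

    def __init__(self, window_seconds=60):
        self.window_seconds = window_seconds
        # (key, window index) -> requests seen. Old windows are never evicted here;
        # with Redis, a key expiry would take care of that.
        self.counts = {}

    def allow(self, key, limit, now=None):
        now = time.time() if now is None else now
        bucket = (key, int(now // self.window_seconds))
        self.counts[bucket] = self.counts.get(bucket, 0) + 1
        return self.counts[bucket] <= limit


# Hypothetical values for illustration only.
ALLOWED_USER_AGENTS = {"example-sdk/secret-2026-03"}
KNOWN_LIMIT = 600       # requests per window for recognized clients
CATCH_ALL_LIMIT = 10    # shared budget for all unrecognized traffic


def check_request(limiter, client_id, user_agent, now=None):
    """Return True if this node should serve the request."""
    if user_agent in ALLOWED_USER_AGENTS:
        return limiter.allow(f"known:{client_id}", KNOWN_LIMIT, now)
    # Unrecognized traffic shares one low catch-all budget.
    return limiter.allow("unknown", CATCH_ALL_LIMIT, now)
```

Since each node keeps its own counters, a client spreading requests over two nodes gets roughly twice the budget, which matches the trade-off accepted above.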

Conclusion

Cost Breakdown

| Component | Provider | Spec | Rough Cost / month | Notes |
|---|---|---|---|---|
| api1 | Linode | 1 vCPU, 2 GB RAM | $12 | Caddy, Django, PG replica, Redis |
| api2 | E2E Networks | 2 vCPU, 6 GB RAM | $12 | Identical setup, different provider |
| DB primary | Contabo | 8 vCPU, 24 GB RAM | $12 | PG primary; SSH tunnel sidecar; not public facing |
| S3 backups | | | <$5 | Hourly pg_dump |
| Total | | | ~$40 | Adding a node costs $12/month |

The specs look mismatched on paper: a 1-core app node and a 24 GB DB node, all for the same price. That's just how budget VPS pricing works across providers. Contabo's ~$12 tier is unusually generous on resources; Linode's is not. The DB node is intentionally bigger: PostgreSQL benefits from having room to keep working sets in memory, and a beefy enough primary means it won't become the bottleneck for a long time.

The obvious question is whether $40 is justified for 220k requests/day. Purely on traffic, no: this load is manageable on a single $10 VPS. Anyone who says otherwise is either running expensive queries or has under-specced hardware. The cost is not buying throughput. It's buying the ability to deploy without downtime, keep reads alive when the primary dies, lose a full node or an entire provider without an incident, and sleep through most failure scenarios. On managed infrastructure, equivalent properties cost $200-400/month: RDS Multi-AZ alone starts at $100+ before any app redundancy.

What Works Well

- Reads don't share a bottleneck. Each node reads from its local replica. At our traffic levels this eliminated DB latency spikes during peaks.

- Adding a node is fast. Clone the setup, bring up one of the pre named spare nodes, wait for replication to catch up. About 30 minutes, no DNS change needed.

- DB is network isolated. Nothing reaches the primary except authenticated SSH tunnels that terminate in a hardened sidecar container on the DB host.

- Caddy just works. TLS certs are issued and renewed automatically per node. No shared cert management.

- Simple to debug. SSH into a node, check Caddy, check gunicorn, check replica lag. No orchestration layer to reason about.

- True node independence. Each node has its own Redis. A bad deploy or crash on one node doesn't affect the others at all.

- Multi cloud by default. Nodes can span AWS, Hetzner, or any provider. No provider specific networking required.

- Zero downtime deploys. A webhook triggers docker-rollout on each node sequentially. If a deploy fails on one node, it stops before touching the next. At no point are all nodes restarting simultaneously.

What we are giving up

- Writes have one throat to choke. Primary goes down, writes stop. Reads keep working but write-dependent endpoints fail. We've accepted this for now; it's an acceptable SPOF at our scale. This is fault-tolerant reads, not full HA.

- Replication lag requires discipline. Write-then-read-own-write flows must explicitly use the primary. This is easy to miss during development and worth having a lint rule or test for.

- Mole needs monitoring. A dropped tunnel means that node can't replicate or write until it reconnects. Restart=always handles most cases within seconds, but we alert on tunnel down events.

- Client complexity. Shifting routing responsibility to clients means every client we ship needs to implement the health/latency based routing logic. This is a real maintenance cost.

Open Questions

- What happens to an in flight write if the Mole tunnel drops mid request?

- Django should receive a clean connection error from PostgreSQL and the transaction should roll back. But we haven't run a controlled failure test to confirm there's no edge case where partial state gets left behind. This is the one thing we'd want verified before calling the system production hardened for write critical workloads.

- What does a client see when it reads stale data from a replica?

- It gets an outdated value silently. There's no error, no staleness header, nothing. The API just returns whatever the replica has at that moment. For our read heavy workload this is acceptable, but it means callers have to be aware that reads are eventually consistent. We don't surface this in the response today, and probably should at least document it clearly in the API spec.

- Does a slow tunnel (not a full drop) cause write timeouts?

- We haven't seen this in practice. The tunnel either stays healthy or drops and reconnects quickly. We don't have an explicit connect_timeout or statement_timeout set on the primary connection beyond Django's defaults, so a persistently slow tunnel would eventually cause gunicorn worker pileups. We haven't hit this, but it's worth adding explicit timeouts as a precaution.

- What if replication falls far behind?

- We don't use replication slots, so there's no slot bloat or WAL accumulation risk on the primary. The replica reconnects and catches up from whatever WAL the primary still retains. The risk is that if a replica is down long enough for the primary to have already recycled the WAL segments it needs, the replica would need to be re initialized from a base backup. We haven't set up alerting on replica lag beyond "is it connected." That should be tightened.

- How are the SSH keys managed across providers?

- Each web node has a dedicated key pair. The public key is authorized on the DB node's SSH sidecar, not on the Postgres host/container itself. Key rotation is currently a manual process. This is fine operationally but is the kind of thing that gets forgotten until it matters. Automated rotation or short-lived certificates via something like Vault would be the right answer at higher stakes.
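On the replica lag question above, a lag-depth check could be as small as the sketch below. It assumes a DB-API cursor (for example from psycopg2) connected to the local replica; the function names and the 10-second threshold are hypothetical. One caveat: this measure also grows when the primary is simply idle, so it may need pairing with a heartbeat write or a WAL position comparison.

```python
def replica_lag_seconds(cursor):
    """Seconds since the last replayed transaction, or None if unavailable.

    None means the server is not in recovery (not a replica) or has not
    replayed any transaction yet.
    """
    cursor.execute(
        "SELECT CASE WHEN pg_is_in_recovery() "
        "THEN EXTRACT(EPOCH FROM now() - pg_last_xact_replay_timestamp()) END"
    )
    row = cursor.fetchone()
    return None if row[0] is None else float(row[0])


def lag_is_alarming(lag, threshold_seconds=10.0):
    """Alert when lag exceeds the threshold, or when it cannot be measured at all."""
    # An unmeasurable lag on a node that is supposed to be a replica is itself suspicious.
    return lag is None or lag > threshold_seconds
```

Run from a cron job or a health endpoint on each web node, this would catch a replica that is connected but falling behind.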

Future Work

- WAL archiving. We currently do hourly pg_dump to S3. Our data changes slowly so this RPO is acceptable for now, but continuous WAL archiving with something like wal-g is the right long-term answer.

- Write SPOF. The primary DB has no standby. Promoting a replica to primary after a failure is a manual process today. Automating this is the next meaningful reliability improvement.

- In flight write behavior under tunnel loss. Needs a controlled failure test to confirm what clients actually experience.

- Replica lag alerting. We alert on tunnel down events but not on lag depth. Adding a lag threshold alert would catch cases where a replica is connected but falling behind.

- Write timeouts. Adding explicit connect_timeout and statement_timeout on the primary connection would bound the blast radius of a degraded tunnel.

- Connection pooling. Multiple gunicorn workers across all web nodes open their own connections to the primary. Under higher write load we might add pgbouncer on the DB node to pool and cap those connections and reduce pressure on the primary.
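For the write timeouts item, the change could look roughly like this on the primary alias. The 5-second values are placeholders, not tuned numbers, and the remaining settings are omitted because the post elides them.

```python
# Fragment of settings.py: only the primary alias, with explicit timeouts added.
DATABASES = {
    "default": {
        "ENGINE": "django.db.backends.postgresql",
        "HOST": "localhost",
        "PORT": "5433",  # Mole tunnel
        "OPTIONS": {
            # libpq connection parameter, in seconds: give up quickly if the
            # tunnel accepts the TCP connection but the primary never answers.
            "connect_timeout": 5,
            # Session-level statement_timeout, in milliseconds: cancel any
            # statement that runs longer than this.
            "options": "-c statement_timeout=5000",
        },
        # remaining keys (name, credentials) as in the existing config
    },
}
```

With both set, a slow tunnel would surface as a bounded error on write endpoints instead of a growing pile of blocked gunicorn workers.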

The system handles 220k requests/day on $40/month, has survived node failures, deploys, and a full provider outage without manual intervention, and the only thing that stops writes is the primary DB going down. We're comfortable with that for now. It's a reasonable, frugal design for our constraints: appropriate for our niche rather than generally superior to managed HA stacks.
